Web Scraping in NodeJS Is Growing in Adoption in 2024

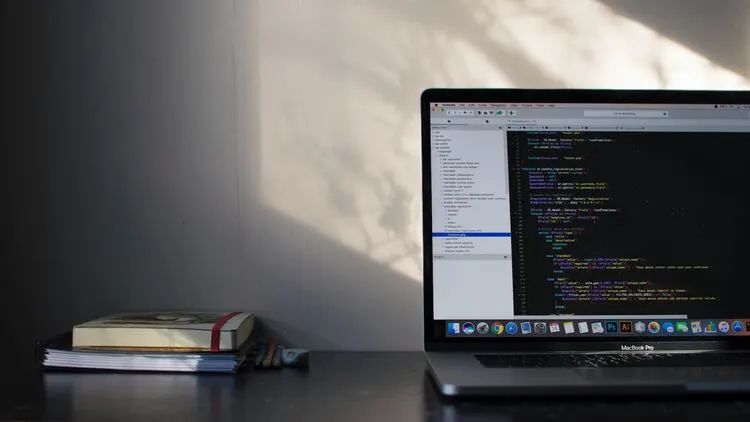

NodeJS, the popular JavaScript runtime, has seen notable growth in data extraction use cases over the last few years. So let’s talk about NodeJS web scraping! Below, we’ll see what makes it an efficient option for such tasks and discuss some of the challenges developers may encounter.

Benefits of NodeJS for Web Scraping

NodeJS is one of the fastest-growing options for extracting data from the web. That can be attributed to a number of reasons, starting with its asynchronous, non-blocking I/O. Namely, a single process can keep many HTTP requests, database queries, and file operations in flight at once instead of waiting for each one to finish. That way, the web scraping process stays fast and efficient.
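To make the non-blocking idea concrete, here is a minimal sketch that starts several page requests at once and waits for all of them together. It assumes Node 18+ (for the built-in `fetch`), and the names `fetchAll` and `fetchPage` are made up for illustration.

```javascript
// Sketch: start every request at once and wait for all of them together.
// `fetchPage` is a hypothetical, swappable function; by default it wraps
// the global fetch() available in Node 18+.
async function defaultFetchPage(url) {
  const response = await fetch(url);
  return response.text();
}

async function fetchAll(urls, fetchPage = defaultFetchPage) {
  // map() calls fetchPage for every URL right away; no request waits
  // for the previous one to finish.
  const pending = urls.map(async (url) => fetchPage(url));

  // allSettled() collects every outcome, so one failed page does not
  // discard the pages that succeeded.
  const settled = await Promise.allSettled(pending);

  return settled.map((result, i) => ({
    url: urls[i],
    ok: result.status === 'fulfilled',
    body: result.status === 'fulfilled' ? result.value : null,
    error: result.status === 'rejected' ? String(result.reason) : null,
  }));
}
```

Calling `fetchAll(['https://example.com/a', 'https://example.com/b'])` (placeholder URLs) resolves to one result object per URL, in the same order as the input. Because all requests overlap, the total time is roughly that of the slowest request rather than the sum of all of them.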

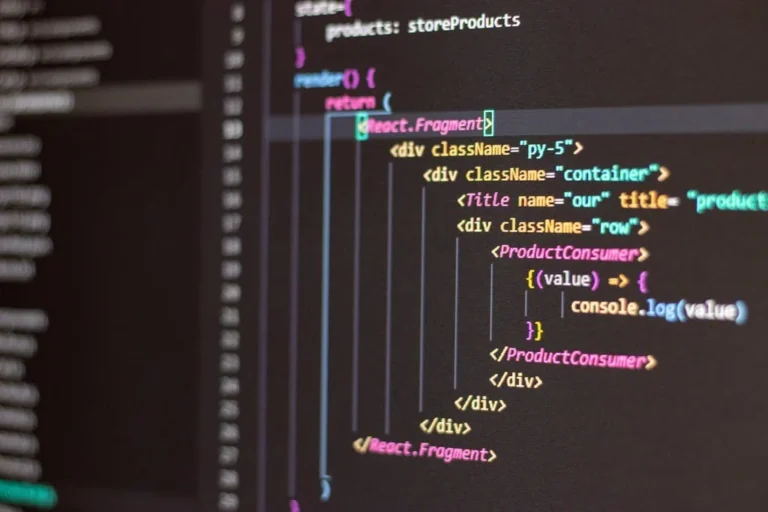

Moreover, it has a vast ecosystem of open-source libraries, like Puppeteer, Cheerio, and Axios, that can help with complex scraping tasks. Axios sends HTTP requests, Cheerio parses HTML and lets you query it with a jQuery-like syntax, and Puppeteer drives a headless browser so you can work with the live Document Object Model.

Another reason for the growing application of NodeJS web scraping is the language itself: JavaScript. As JS is one of the most used languages among developers, many of them can rely on existing knowledge and experience to ease the learning curve. Its popularity also means that anyone starting with Node can benefit from many tutorials, guides, and other resources, as well as a large community ready to assist.

NodeJS is also a reliable option for scaling up your web scraping projects thanks to its event-driven architecture, which handles many concurrent connections at once. Its event loop eliminates the need to create a new thread for each connection, which keeps memory use and context-switching overhead low.
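When scaling up, you usually want many requests in flight but not an unlimited number. The sketch below shows one way to cap concurrency on top of the event loop, with no threads involved. The names `runWithLimit` and `limit` are hypothetical, and each task is assumed to be a function that returns a promise.

```javascript
// Sketch: run async tasks with at most `limit` of them in flight at a time.
// `tasks` is an array of functions that each return a promise.
async function runWithLimit(tasks, limit) {
  const results = new Array(tasks.length);
  let next = 0; // index of the next task to start

  // Each worker keeps pulling the next unstarted task until none are left.
  // No locking is needed: these callbacks all run on a single thread.
  async function worker() {
    while (next < tasks.length) {
      const index = next++;
      results[index] = await tasks[index]();
    }
  }

  // Start at least one worker, and never more workers than tasks.
  const workerCount = Math.min(Math.max(1, limit), tasks.length);
  // If any task rejects, the whole run rejects with that error.
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}
```

For example, `runWithLimit(urls.map((url) => () => fetch(url)), 5)` would keep up to five requests open at once, with results returned in the same order as the input.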

These are just a few of the benefits NodeJS offers. But even with a fast-growing option for web scraping, there are some issues you might run into.

Challenges for Web Scraping in NodeJS

If you get blocked while web scraping in Node, it’s probably because of one of the following reasons:

- Rate limiting: Many websites enforce rate limits to avoid being overwhelmed by excessive requests. To stay under them, you can slow down your request rate or use proxies to distribute the requests among different IPs. Otherwise, your scraper is likely to get blocked.

- IP blocking: Many anti-bot systems assign a score to your IP as soon as you make a request. It’s based on the IP’s reputation history, known association with bot activity, and geolocation. A poor score may get your scraper blocked, but premium rotating proxies help you reduce that risk.

- CAPTCHAs: These challenges aim to distinguish humans from bots and are getting increasingly effective. Trying to solve them will only slow you down, and employing solving services will end up being quite expensive. That’s why the best course of action is to avoid triggering them by using headless browsers to simulate human-like interactions with the site.

- HTTP header analysis: The request headers, especially the User-Agent, contain information that can easily give away your scraper. Therefore, you need to use real, correctly formed, and matching headers to avoid raising any red flags.
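Two of the points above, rate limiting and headers, can be sketched in a few lines. The helper below sends a consistent set of browser-like headers and backs off when the server answers with HTTP 429 (Too Many Requests). It is only an illustration under stated assumptions: `politeFetch`, `backoffDelay`, the option names, and the header values are all made up for this example, and it relies on the `fetch` built into Node 18+.

```javascript
// Sketch: send consistent browser-like headers and back off on HTTP 429.
// All names and values here are illustrative assumptions.
const DEFAULT_HEADERS = {
  'User-Agent':
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 ' +
    '(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',
  'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
  'Accept-Language': 'en-US,en;q=0.9',
};

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Exponential backoff: baseMs, 2 * baseMs, 4 * baseMs, ...
function backoffDelay(attempt, baseMs) {
  return baseMs * 2 ** attempt;
}

async function politeFetch(url, options = {}) {
  const {
    fetchImpl = globalThis.fetch, // injectable so it can be swapped in tests
    maxRetries = 3,
    baseDelayMs = 1000,
    headers = DEFAULT_HEADERS,
  } = options;

  for (let attempt = 0; attempt <= maxRetries; attempt++) {
    const response = await fetchImpl(url, { headers });
    if (response.status !== 429) return response;
    if (attempt === maxRetries) break;

    // Honor a numeric Retry-After header (seconds) when the server sends one;
    // otherwise fall back to exponential backoff.
    const retryAfter = Number(response.headers.get('retry-after'));
    const waitMs =
      retryAfter > 0 ? retryAfter * 1000 : backoffDelay(attempt, baseDelayMs);
    await sleep(waitMs);
  }
  throw new Error(`Still rate limited after ${maxRetries} retries: ${url}`);
}
```

This does not rotate IPs or deal with CAPTCHAs; it only covers the backoff and header parts of the list.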

As you can see, these and many other obstacles stand in the way of your scraper. Fortunately, there’s a way to avoid the hassle and extract the data you want with far less effort.

Use a Web Scraping API

Building a web scraper in NodeJS can be easy with the right tools. ZenRows is a web scraping API that can extract data from any website. It has advanced features to bypass all anti-bot measures, like WAFs, CAPTCHAs, user behavior analysis, and more.

Its toolkit includes premium residential proxies, JavaScript rendering, headless browsers, and geo-targeting. With all this and more at your disposal, much of the anti-bot work is handled for you. You’ll get 1,000 free credits upon registration, so you can see how much time and effort it can save you.

Conclusion

NodeJS is undeniably going strong in the web scraping world, and there are good reasons for that. Its efficiency, diverse ecosystem, event-driven architecture, and gentle learning curve make it approachable even for scraping beginners.

Keep in mind that extracting web data using any language poses challenges. Fortunately, there’s always a solution. ZenRows’ web scraping API can handle all the hard work while ensuring you get the data you want.